1 Meet the Penguins

In this workshop we will work through setting up a project and loading data. Once we have a curated and cleaned the dataset we can work on generating insights from the data.

As a biologist you should be used to asking questions and gathering data. It is also important that you learn all aspects of the research process. This includes responsible data management (understanding data files & spreadsheet organisation, keeping data safe) and data analysis.

In this chapter we will look at the structure of data files, and how to read these with R. We will also continue to develop reproducible scripts. This means that we are writing scripts that are well organised and easy to read, and also making sure that our scripts are complete and capable of reproducing an analysis from start to finish.

Transparency and reproducibility are key values in scientific research, when you analyse data in a reproducible way it means that others can understand and check your work. It also means that the most important person can benefit from your work, YOU! When you return to an analysis after even a short break, you will be thanking your earlier self if you have worked in a clear and reproducible way, as you can pick up right where you left off.

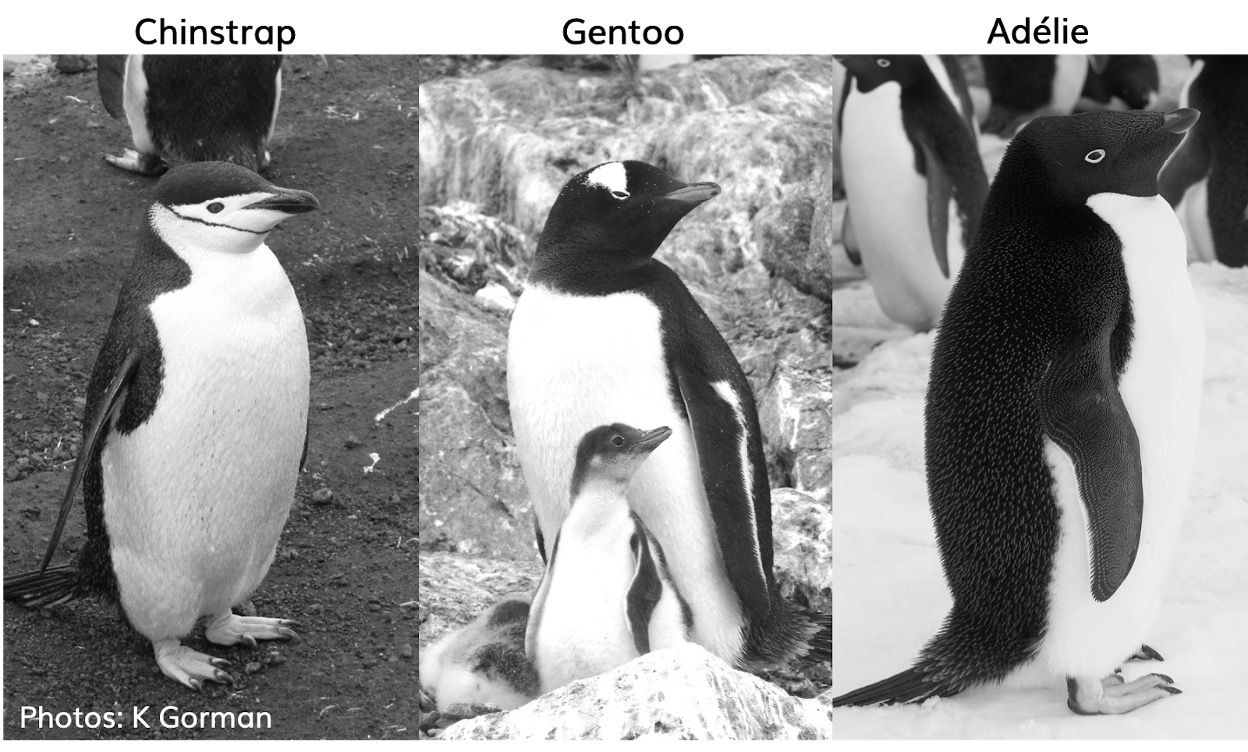

1.1 Meet the Penguins

This data, taken from the palmerpenguins (Horst et al. (2022)) package was originally published by Gorman et al. (2014). In our course we will work with real data that has been shared by other researchers.

The palmer penguins data contains size measurements, clutch observations, and blood isotope ratios for three penguin species observed on three islands in the Palmer Archipelago, Antarctica over a study period of three years.

These data were collected from 2007 - 2009 by Dr. Kristen Gorman with the Palmer Station Long Term Ecological Research Program, part of the US Long Term Ecological Research Network. The data were imported directly from the Environmental Data Initiative (EDI) Data Portal, and are available for use by CC0 license (“No Rights Reserved”) in accordance with the Palmer Station Data Policy. We gratefully acknowledge Palmer Station LTER and the US LTER Network. Special thanks to Marty Downs (Director, LTER Network Office) for help regarding the data license & use. Here is our intrepid package co-author, Dr. Gorman, in action collecting some penguin data:

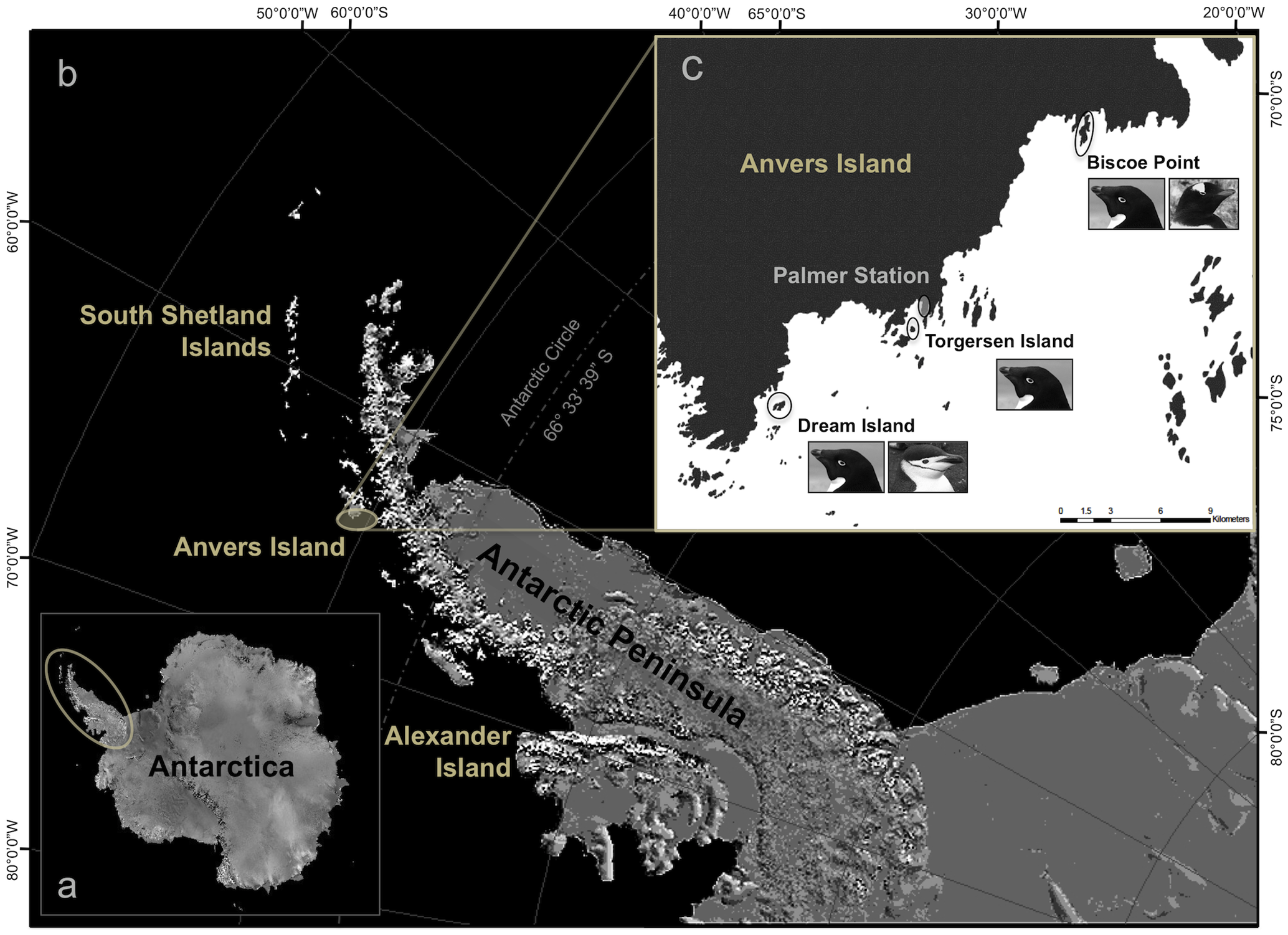

Here is a map of the study site

1.2 Activity 1: Organising our workspace

Before we can begin working with the data, we need to do some set-up.

Go to RStudio Cloud and make a new R Project - from template: 5027A

Call your project ‘Penguins’

-

Create the following folders using the + New Folder button in the Files tab

- data/raw

- data/processed

- results

- scripts

R is case-sensitive so type everything EXACTLY as printed here

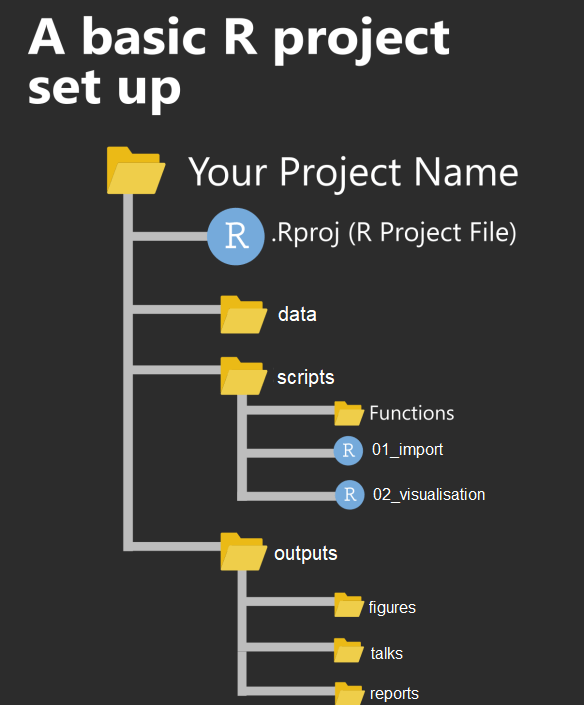

2 Check your directory structure

my_project.RProj/

|- data/

| |- raw/

| |- penguins_raw.csv

| |- processed/

|- scripts/

|- results/

Having these separate subfolders within our project helps keep things tidy, means it’s harder to lose things, and lets you easily tell R exactly where to go to retrieve data.

The next step of our workflow is to have a well organised project space. RStudio Cloud does a lot of the hard work for you, each new data project can be set up with its own Project space.

We will define a project as a series of linked questions that uses one (or sometimes several) datasets. For example a coursework assignment for a particular module would be its own project, a series of linked experiments or particular research project might be its own project.

A Project will contain several files, possibly organised into sub-folders containing data, R scripts and final outputs. You might want to keep any information (wider reading) you have gathered that is relevant to your project.

Check out Appendix B for more information on filepaths and data management.

Within this project you will notice there is already one file .Rproj. This is an R project file, this is a very useful feature, it interacts with R to tell it you are working in a very specific place on the computer (in this case the cloud server we have dialed into). It means R will automatically treat the location of your project file as the ‘working directory’ and makes importing and exporting easier. More on projects can be found in the R4DS book (@-R4DS).

It is very important to NEVER to move the .Rproj file, this may prevent your workspace from opening properly.

2.1 Activity 2: Access our data

Now that we have a project workspace, we are ready to import some data.

Use the link below to open a page in your browser with the data open

Right-click Save As to download in csv format to your computer (Make a note of where the file is being downloaded to e.g. Downloads)

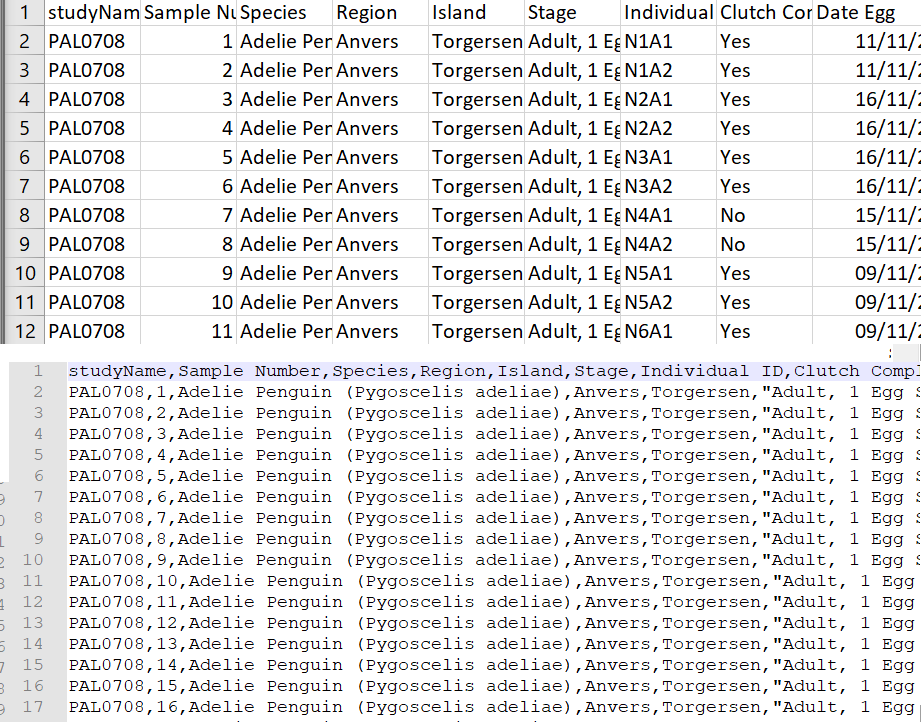

In raw format, each line of a CSV is separated by commas for different values. When you open this in a spreadsheet program like Excel it automatically converts those comma-separated values into tables and columns.

2.2 Activity 3: Upload our data

The data is now in your Downloads folder on your computer

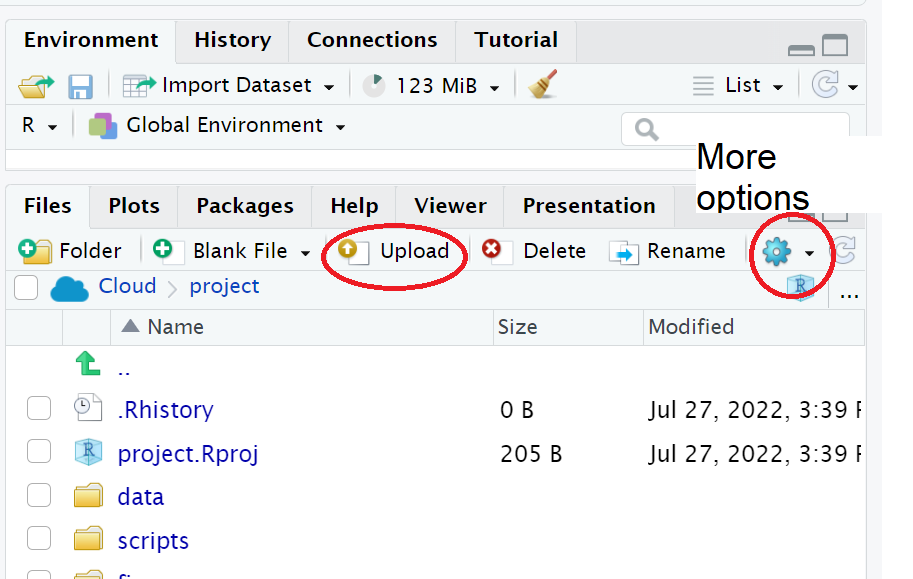

We need to upload the data to our remote cloud-server (RStudio Cloud), select the upload files to server button in the Files tab

Put your file into the data folder - if you make a mistake select the tickbox for your file, go to the cogs button and choose the option Move.

2.3 Activity 4: Make a script

Let’s now create a new R script file in which we will write instructions and store comments for manipulating data, developing tables and figures. Use the File > New Script menu item and select an R Script.

Add the following:

#___________________________----

# SET UP ----

# An analysis of the bill dimensions of male and female

# Adelie, Gentoo and Chinstrap penguins

# Data first published in Gorman, KB, TD Williams, and WR Fraser.

# 2014.

# “Ecological Sexual Dimorphism and Environmental Variability

# Within a Community of Antarctic Penguins (Genus Pygoscelis).”

# PLos One 9 (3): e90081.

# https://doi.org/10.1371/journal.pone.0090081.

#__________________________----Why do we need scripts to be organised?

Scripts are a human readable record of our analysis - we need them to be organised, consistent, and well-documented. See Section C.3 for general tips on how to do this.

Well organised scripts are useful in that provide a reference for what you did - think of them as the analysis equivalent of a lab notebook. The more detail provided in the form of comments, the more context we have. The more organised they are, the easier it is to find the sections we need to review. Just like a lab notebook they should be written as though someone else will be reading them.

Next load the following add-on package to the R script, just underneath these comments. Tidyverse isn’t actually one package, but a bundle of many different packages that play well together - for example it includes ggplot2 a package for making figures (Wickham (2023)).

Add the following to your script:

Note you may need to run

install.packages()to download and set up packages the first time you make a new project.

Save this file inside the scripts folder and call it 01_import_penguins_data.R

Click on the document outline button (top right of script pane). This will show you how the use of the visual outline

Allows us to build a series of headers and subheaders, this is very useful when using longer scripts.

2.4 Activity 5: Read in data

Now we can read in the data. To do this we will use the function readr::read_csv() that allows us to read in .csv files. There are also functions that allow you to read in .xlsx files and other formats like .json, however in this course we will only use .csv files.

We are also using here::here(), Müller (2020). The here package helps you load and save files in your project without worrying about changing your working directory, by always starting from the project’s main folder.

First, we will create an object called

penguins_rawthat contains the data in thepenguins_raw.csvfile.Add the following to your script, and check the document outline:

| studyName | Sample Number | Species | Region | Island | Stage | Individual ID | Clutch Completion | Date Egg | Culmen Length (mm) | Culmen Depth (mm) | Flipper Length (mm) | Body Mass (g) | Sex | Delta 15 N (o/oo) | Delta 13 C (o/oo) | Comments |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| PAL0708 | 1 | Adelie Penguin (Pygoscelis adeliae) | Anvers | Torgersen | Adult, 1 Egg Stage | N1A1 | Yes | 11/11/2007 | 39.1 | 18.7 | 181 | 3750 | MALE | NA | NA | Not enough blood for isotopes. |

| PAL0708 | 2 | Adelie Penguin (Pygoscelis adeliae) | Anvers | Torgersen | Adult, 1 Egg Stage | N1A2 | Yes | 11/11/2007 | 39.5 | 17.4 | 186 | 3800 | FEMALE | 8.94956 | -24.69454 | NA |

| PAL0708 | 3 | Adelie Penguin (Pygoscelis adeliae) | Anvers | Torgersen | Adult, 1 Egg Stage | N2A1 | Yes | 16/11/2007 | 40.3 | 18.0 | 195 | 3250 | FEMALE | 8.36821 | -25.33302 | NA |

| PAL0708 | 4 | Adelie Penguin (Pygoscelis adeliae) | Anvers | Torgersen | Adult, 1 Egg Stage | N2A2 | Yes | 16/11/2007 | NA | NA | NA | NA | NA | NA | NA | Adult not sampled. |

| PAL0708 | 5 | Adelie Penguin (Pygoscelis adeliae) | Anvers | Torgersen | Adult, 1 Egg Stage | N3A1 | Yes | 16/11/2007 | 36.7 | 19.3 | 193 | 3450 | FEMALE | 8.76651 | -25.32426 | NA |

| PAL0708 | 6 | Adelie Penguin (Pygoscelis adeliae) | Anvers | Torgersen | Adult, 1 Egg Stage | N3A2 | Yes | 16/11/2007 | 39.3 | 20.6 | 190 | 3650 | MALE | 8.66496 | -25.29805 | NA |

Note the differences between read.csv() and read_csv(). We covered this in differences between tibbles and dataframes - here most obviously is a difference in column names.

2.5 Activity: Check your script

#___________________________----

# SET UP ----

# An analysis of the bill dimensions of male and female

# Adelie, Gentoo and Chinstrap penguins

# Data first published in Gorman, KB, TD Williams, and WR Fraser.

# 2014.

# “Ecological Sexual Dimorphism and Environmental Variability

# Within a Community of Antarctic Penguins (Genus Pygoscelis).”

# PLos One 9 (3): e90081.

# https://doi.org/10.1371/journal.pone.0090081.

#__________________________----

# PACKAGES ----

library(tidyverse) # tidy data packages

library(here) # organised file paths

library(janitor) # cleans variable names

#__________________________----

# IMPORT DATA ----

penguins_raw <- read_csv(here("data", "raw", "penguins_raw.csv"))

# check the data has loaded, prints first 10 rows of dataframe

penguins_raw

#__________________________----2.6 Activity: Test yourself

Question 1. In order to make your R project reproducible what filepath should you use?

Question 2. Which of these would be acceptable to include in a raw datafile?

Question 3. What should always be the first set of functions in our script? ?()

Question 4. When reading in data to R we should use

Question 5. What format is the penguins_raw data in?

Each column is a unique variable and each row is a unique observation so this data is in a long (tidy) format

Question 6. The working directory for your projects is by default set to the location of?

Question 7. Using the filepath "data/penguins_raw.csv" is an example of

Question 8. What operator do I need to use if I wish to assign the output of the read_csv function to an R object (rather than just print the dataframe into the console)?